Showing posts with label light field. Show all posts

Sunday, January 24, 2021

Friday, July 28, 2017

The world's only single-lens Monocentric wide-FOV light field camera.

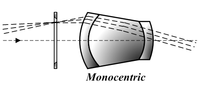

The operative word here is Monocentric

Monocentric

A Monocentric is an achromatic triplet lens with two pieces of crown glass cemented on both sides of a flint glass element. The elements are thick and strongly curved, and their surfaces share a common center, which gives the lens the name "monocentric". It was invented by Hugo Adolf Steinheil around 1883. This design, like the solid eyepiece designs of Robert Tolles, Charles S. Hastings, and E. Wilfred Taylor, is free from ghost reflections and gives a bright, high-contrast image, a desirable feature when it was invented (before anti-reflective coatings).

A Wide-Field-of-View Monocentric Light Field Camera

Donald G. Dansereau, Glenn Schuster, Joseph Ford, and Gordon Wetzstein

Stanford University, Department of Electrical Engineering

http://www.computationalimaging.org/wp-content/uploads/2017/04/LFMonocentric.pdf

Abstract:

Light field (LF) capture and processing are important in an expanding range of computer vision applications, offering rich textural and depth information and simplification of conventionally complex tasks. Although LF cameras are commercially available, no existing device offers wide field-of-view (FOV) imaging. This is due in part to the limitations of fisheye lenses, for which a fundamentally constrained entrance pupil diameter severely limits depth sensitivity. In this work we describe a novel, compact optical design that couples a monocentric lens with multiple sensors using microlens arrays, allowing LF capture with an unprecedented FOV. Leveraging capabilities of the LF representation, we propose a novel method for efficiently coupling the spherical lens and planar sensors, replacing expensive and bulky fiber bundles. We construct a single sensor LF camera prototype, rotating the sensor relative to a fixed main lens to emulate a wide-FOV multi-sensor scenario. Finally, we describe a processing tool chain, including a convenient spherical LF parameterization, and demonstrate depth estimation and post-capture refocus for indoor and outdoor panoramas with 15 × 15 × 1600 × 200 pixels (72 MPix) and a 138° FOV.
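As a quick sanity check on the figures quoted in the abstract, the four light field dimensions do multiply out to the stated 72 MPix. Reading 15 × 15 as the angular samples and 1600 × 200 as the spatial samples is my assumption; the abstract only gives the four numbers.

```python
# Dimensions quoted in the abstract: 15 x 15 x 1600 x 200.
# Assumption: the first pair is angular samples, the second pair spatial samples.
angular = (15, 15)
spatial = (1600, 200)

total_samples = angular[0] * angular[1] * spatial[0] * spatial[1]
megapixels = total_samples / 1e6

print(f"{total_samples:,} samples = {megapixels:.0f} MPix")
```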

---------

Designing a 4D camera for robots

Stanford engineers have developed a 4D camera with an extra-wide field of view. They believe this camera can be better than current options for close-up robotic vision and augmented reality.

A light field camera, also known as a plenoptic camera, works by capturing information about the light field emanating from the scene. It measures the intensity of the light in the scene and also the direction in which the light rays travel. Traditional photography captures only the light intensity.

The researchers call their design the “first-ever single-lens, wide field of view, light field camera.” The camera combines the information it gathers about the light in the scene with the 2D image to create the 4D image.

This means the photo can be refocused after the image has been captured. The researchers use the analogy of looking through a window versus through a peephole to describe the difference between traditional photography and the new technology. They say, “A 2D photo is like a peephole because you can’t move your head around to gain more information about depth, translucency or light scattering. Looking through a window, you can move and, as a result, identify features like shape, transparency and shininess.”

The 4D camera’s unique qualities make it well suited for use with robots. For instance, images captured by a search and rescue robot could be zoomed in and refocused to provide important information to base control. The imagery produced by the 4D camera could also have applications in augmented reality, as the information-rich images could help with better quality rendering.

The 4D camera is still at a proof-of-concept stage and is too big for any of its possible future applications. But now that the technology is working, smaller and lighter versions can be developed. The researchers explain their motivation for creating a camera specifically for robots. Donald Dansereau, a postdoctoral fellow in electrical engineering, explains, “We want to consider what would be the right camera for a robot that drives or delivers packages by air. We’re great at making cameras for humans but do robots need to see the way humans do? Probably not.”

The research will be presented at the computer vision conference, CVPR 2017 on July 23.

First images from the world's only single-lens wide-FOV light field camera.

From CVPR 2017 paper "A Wide-Field-of-View Monocentric Light Field Camera",

http://dgd.vision/Projects/LFMonocentric/

This parallax pan scrolls through a 138-degree, 72-MPix light field captured using our optical prototype. Shifting the virtual camera position over a circular trajectory during the pan reveals the parallax information captured by the LF.

There is no post-processing or alignment between fields; this is the raw light field as measured by the camera.

Other related work:

http://spie.org/newsroom/6666-panoramic-full-frame-imaging-with-monocentric-lenses-and-curved-fiber-bundles

Monocentric lens-based multi-scale optical systems and methods of use US 9256056 B2

Labels:

light field,

Monocentric,

Plenoptic,

wide angle

Sunday, June 18, 2017

Road to the Holodeck: Lightfields and Volumetric VR

It's come and gone, but it looked to be very interesting.

https://www.eventbrite.com/e/road-to-the-holodeck-lightfields-and-volumetric-vr-tickets-34087827610#

What's a lightfield, you ask?

Several technologies are required for VR's holy grail: the fabled holodeck. We already have graphical VR experiences that let us move throughout volumetric spaces, such as video games. And we have photorealistic media that lets us look around a 360 plane from a fixed position (a.k.a. head tracking).

But what about the best of both worlds?

We're talking volumetric spaces in which you can move around, but are also photorealistic. In addition to things like positional tracking and lots of processing horsepower, the heart of this vision is lightfields. They define how photons hit our eyes and render what we see.

Because it's a challenge to capture photorealistic imagery from every possible angle in a given space -- as our eyes do in real reality -- the art of lightfields in VR involves extrapolating many vantage points, once a fixed point is captured. And that requires clever algorithms, processing, and a whole lot of data.

Monday, February 08, 2016

LEIA 3D - Light field display - Holographic display module

https://www.leia3d.com/

“There has been very little innovation in the basic physics for making 3-D images since early in the 20th century. This new display is transforming a technology that’s been around for 100 years.” – MIT Tech Review

They have developed a holographic technology that makes it possible to deliver collaborative 3D experiences through an interactive display with no eyewear required.

Every standard LCD display has a component called a backlight. A backlight is composed of an LED light source and a light guide, which directs light toward the display’s pixels. Once light passes through them, images appear on the display as the LCD selectively blocks varying amounts of light at each pixel.

LEIA 3D’s technology replaces the standard light guide with a much more sophisticated one that has nanoscale gratings. Our new light guide delivers more control over the direction that light travels before it reaches the pixel array, and can direct a single ray of light to a single given pixel on the display.

This diffractive “multiview backlight” allows the projection of different images in different directions of space with smooth, gradual transitions between views. The result is content that looks 3D from any viewpoint, by any number of viewers, with a seamless sense of parallax—without the breaks, ghost images, or “bad spots” that are commonly experienced in lenticular displays. The visual experience is remarkable and provides a much wider field of view with the ability to update content at video rate.

PRODUCT SPECS:

- 64 views (8×8), full parallax, field of view (FOV) of 60°

- 200×200 pixels per view, total LCD resolution 1600×1600

- 8-bit monochrome output (color can be adjusted, default is white)

- HDMI video input at 60 fps, tiled or swizzled views supported

- Powered directly via USB cable, no battery needed

- Capacitive “Hover-Touch” panel, senses your fingers in proximity to the display and lets you interact with holograms without touching the screen (Haptics not available with this model.)

- Gyro / Accelerometer
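The spec numbers fit together: 8 views × 200 pixels gives the 1600-pixel panel width. Here is a minimal sketch of how a view sample might map onto the panel in the two input layouts. The "tiled" mapping is the obvious one; the interleaved mapping is only my assumption about what "swizzled" means here, since the actual LEIA format is not described in this post. The function names are hypothetical.

```python
VIEWS = 8        # 8 x 8 = 64 views (from the spec list)
VIEW_RES = 200   # 200 x 200 pixels per view (from the spec list)
PANEL_RES = VIEWS * VIEW_RES  # 1600, matching the stated LCD resolution


def tiled_to_panel(u, v, x, y):
    """Tiled layout: each view (u, v) occupies its own 200 x 200 block.
    Returns the (row, col) of view pixel (x, y) on the panel."""
    return (v * VIEW_RES + y, u * VIEW_RES + x)


def interleaved_to_panel(u, v, x, y):
    """Interleaved layout (an assumed reading of 'swizzled'): the 64 view
    samples of one spatial position sit together in an 8 x 8 pixel block."""
    return (y * VIEWS + v, x * VIEWS + u)


# The same sample lands in very different places in the two layouts.
print(tiled_to_panel(1, 0, 0, 0))        # second view, first pixel
print(interleaved_to_panel(1, 0, 0, 0))
```

Either way every one of the 1600×1600 panel pixels carries exactly one (view, pixel) sample, which is why the per-view resolution is only 200×200.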

Labels:

3D Display,

LEIA 3D,

light field

Sunday, February 07, 2016

Light Field Imaging: The Future of VR-AR-MR

Published on Nov 24, 2015

Presented by the VES Vision Committee. Presentation by Jon Karafin, Head of Light Field Video for Lytro, followed by a Q&A with all presenters moderated by Scott Squires, VES. View Parts 1-3, as well as an amazing 360 video of the Panel with all presenters at

https://www.youtube.com/playlist?list=PLkK8iVS5ZZ7TsRbdPIviKymxlGmf3t9qW

https://www.visualeffectssociety.com/events/event/event-light-field-imaging-future-vr-ar-mr-los-angeles

Event - Light Field Imaging: The Future of VR-AR-MR (Los Angeles)

When:

Tue Nov 17, 2015

6:30pm to 9:30pm

Type:

event

(Image from Light Field Capture device – Photo provided by Lytro)

Light Field Imaging is a technology designed to capture and re-create light rays in a three dimensional scene. It has applications in entertainment, consumer devices, industrial applications and medical imaging. The presentations will cover the latest research in this technology which promises to revolutionize virtual, augmented and mixed reality.

Check out videos of the event at the links below:

https://www.youtube.com/watch?v=Raw-VVmaXbg

https://www.youtube.com/watch?v=ftZd6h-RaHE

https://www.youtube.com/watch?v=0LLHMpbIJNA

https://www.youtube.com/watch?v=_PVok9nUxME

Watch 360 degree video here: https://www.youtube.com/watch?v=FbP9hsdnVmg&list=PLkK8iVS5ZZ7TsRbdPIviKymxlGmf3t9qW&index=5

Principal Speakers:

Paul Debevec, Chief Visual Officer, USC Institute for Creative Technologies, will present the latest technologies being developed at USC ICT on light fields and photoreal virtual actors for Virtual Reality. He will cover the areas of high-resolution face scanning, real-time photoreal digital characters, and light field capture and playback for creating breathtaking realistic and interactive VR content.

Mark Bolas, Director for Mixed Reality Research at USC Institute for Creative Technologies, will describe the MxR Lab and Studio’s recent work on: Discovering Near Field VR Stop Motion with a Touch of Light Fields and a Dash of Redirection which won the Best VR/AR competition at SIGGRAPH 2015.

Jules Urbach, Founder & CEO of OTOY will discuss OTOY’s cutting edge light field rendering toolset and platform.

Jon Karafin, Head of Light Field Video for Lytro, a company which develops light field cameras, will discuss light field technologies and their application in visual effects workflows, cinematography and virtual reality, as well as the next generation of state-of-the-art capture systems.

Moderator: Scott Squires, VES, Academy Tech Award Winning Visual Effects Supervisor and Developer

Labels:

360,

camera,

camera array,

depth camera,

light field

Sunday, June 14, 2015

Future of Adobe Photoshop Demo - GPU Technology Conference - YouTube

Demonstration of turning a light field image into a viewable image using the GPU to accelerate performance.

https://youtu.be/lcwm4yaom4w

http://www.netbooknews.com/9917/futur... There was a lot going on during the Day 1 keynote at Nvidia's GPU conference, but as far as consumers are concerned there was one significant demo. The demo involved Adobe showing off a technology being developed using a plenoptic lens, aka one of those lenses that looks like a bug eye. By attaching a number of small lenses to a camera, users can take a picture that looks, at first, like something an insect might see, with each lens displaying a small part of the entire picture. Using an algorithm Adobe invented, the multitude of little images can be resolved into a single photo. The possibilities for manipulating the photo are endless. 2D images are made 3D, and users can adjust the focal point in real time so the background comes into focus and the subject goes blurry. Fingers crossed this gets into Adobe CS6 or CS7.

Light Field Cameras for 3D Imaging and Reconstruction | Thoughts from Tom Kurke

This is an excellent article about light field / plenoptic cameras, Pelican Imaging, and Lytro, and it lists all the significant published papers in this area.

http://3dsolver.com/light-field-cameras-for-3d-imaging/

Labels:

light field,

Lytro,

NVIDIA,

Pelican Imaging,

Plenoptic,

raytrix

Superresolution with Plenoptic Camera 2.0

Excellent paper

Abstract:

This work is based on the plenoptic 2.0 camera, which captures an array of real images focused on the object. We show that this very fact makes it possible to use the camera data with super-resolution techniques, which enables the focused plenoptic camera to achieve high spatial resolution. We derive the conditions under which the focused plenoptic camera can capture radiance data suitable for super resolution. We develop an algorithm for super resolving those images. Experimental results are presented that show a 9× increase in spatial resolution compared to the basic plenoptic 2.0 rendering approach. Categories and Subject Descriptors (according to ACM CCS): I.4.3 [Image Processing and Computer Vision, Imaging Geometry, Super Resolution]:

http://www.tgeorgiev.net/Superres.pdf

Labels:

light field,

Plenoptic,

Super resolution

Image and Depth from a Conventional Camera with a Coded Aperture

Anat Levin, Rob Fergus, Fredo Durand, Bill Freeman

Abstract

A conventional camera captures blurred versions of scene information away from the plane of focus. Camera systems have been proposed that allow for recording all-focus images, or for extracting depth, but to record both simultaneously has required more extensive hardware and reduced spatial resolution. We propose a simple modification to a conventional camera that allows for the simultaneous recovery of both (a) high resolution image information and (b) depth information adequate for semi-automatic extraction of a layered depth representation of the image. Our modification is to insert a patterned occluder within the aperture of the camera lens, creating a coded aperture. We introduce a criterion for depth discriminability which we use to design the preferred aperture pattern. Using a statistical model of images, we can recover both depth information and an all-focus image from single photographs taken with the modified camera. A layered depth map is then extracted, requiring user-drawn strokes to clarify layer assignments in some cases. The resulting sharp image and layered depth map can be combined for various photographic applications, including automatic scene segmentation, post-exposure refocussing, or re-rendering of the scene from an alternate viewpoint.

http://groups.csail.mit.edu/graphics/CodedAperture/

Saturday, June 06, 2015

Computational cameras

Computational Cameras: Convergence of Optics and Processing

Changyin Zhou, Student Member, IEEE, and Shree K. Nayar, Member, IEEE

Abstract—A computational camera uses a combination of optics and processing to produce images that cannot be captured with traditional cameras. In the last decade, computational imaging has emerged as a vibrant field of research. A wide variety of computational cameras has been demonstrated to encode more useful visual information in the captured images, as compared with conventional cameras. In this paper, we survey computational cameras from two perspectives. First, we present a taxonomy of computational camera designs according to the coding approaches, including object side coding, pupil plane coding, sensor side coding, illumination coding, camera arrays and clusters, and unconventional imaging systems. Second, we use the abstract notion of light field representation as a general tool to describe computational camera designs, where each camera can be formulated as a projection of a high-dimensional light field to a 2-D image sensor. We show how individual optical devices transform light fields and use these transforms to illustrate how different computational camera designs (collections of optical devices) capture and encode useful visual information. Index Terms—Computer vision, imaging, image processing, optics.

http://www1.cs.columbia.edu/CAVE/publications/pdfs/Zhou_TIP11.pdf
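The abstract's idea that a camera is "a projection of a high-dimensional light field to a 2-D image sensor" can be illustrated with a toy example. This is only a sketch under my own assumptions, not code from the paper: the light field is a small nested list `lf[v][u][y][x]`, where (u, v) indexes the position on the aperture and (x, y) the pixel, and the function names are made up for illustration.

```python
def make_light_field(n_u, n_v, width, height):
    """Build a toy 4D light field lf[v][u][y][x] as nested lists.
    The sample values are arbitrary; they only make the views differ."""
    return [[[[float(x + y + u + v) for x in range(width)]
              for y in range(height)]
             for u in range(n_u)]
            for v in range(n_v)]


def pinhole_image(lf, u, v):
    """Pinhole projection: keep a single aperture sample (u, v) per pixel."""
    return lf[v][u]


def full_aperture_image(lf):
    """Full-aperture projection: average over every (u, v) sample, roughly
    what a conventional lens and sensor do at each pixel."""
    n_v, n_u = len(lf), len(lf[0])
    height, width = len(lf[0][0]), len(lf[0][0][0])
    return [[sum(lf[v][u][y][x] for v in range(n_v) for u in range(n_u))
             / (n_u * n_v)
             for x in range(width)]
            for y in range(height)]


lf = make_light_field(n_u=3, n_v=3, width=4, height=2)
print(pinhole_image(lf, 1, 1)[0])     # one 2D view out of the 4D data
print(full_aperture_image(lf)[0])     # all directions collapsed into 2D
```

Both functions throw away two of the four dimensions; they just differ in how. Other computational camera designs in the survey can be thought of as different choices of this projection.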

Saturday, December 27, 2014

Workshop on Light Field Imaging to be held at Stanford on February 12, 2015

Workshop on Light Field Imaging

February 12, 2015

MacKenzie Conference Room, Huang Engineering Center

Stanford University

We invite you to join us on February 12, 2015 at Stanford University to explore the exciting area of research and product development in Light Field Imaging.

The Workshop on Light Field Imaging will include a summary of the state-of-the-art research and a glimpse into the future of technologies designed to capture and create light rays in a three dimensional scene. Participants will leave with a better understanding of the concept of a light field as it is used in geometric optics, computer vision, computer graphics and computational photography. The Workshop will include talks that summarize recent advances in light field cameras and light field displays, as well as applications of these technologies in entertainment, consumer devices, industrial applications and medical imaging. The Workshop will also include an interactive session with experts from industry and academia addressing questions about the killer applications and challenges in product development, new areas for research and graduate training, and the future of light field imaging. There will also be a technology demo session that will include presentations by research labs and startup companies.

You can now register for the Workshop on Light Field Imaging that will be held at Stanford on February 12, 2015. Registration is limited to 200 people, and we are rapidly approaching this limit, so you should register now if you intend to participate.

Visit our website to get updates on the program. A list of companies that will be participating in the Interactive Demo Session will be published in the coming weeks.

If you would like to receive future announcements about this event, be sure to subscribe to our mailing list at https://mailman.stanford.

Labels:

computational photography,

light field

Thursday, December 04, 2014

Paolo Favaro: Portable Light Field Imaging: Extended Depth of Field, Ali...

From ICCP11 Hosted by Carnegie Mellon University, Robotics Institute

April 8, 2011

Abstract:

Portable light field cameras have demonstrated capabilities beyond conventional cameras. In a single snapshot, they enable digital image refocusing, i.e., the ability to change the camera focus after taking the snapshot, and 3D reconstruction. We show that they also achieve a larger depth of field while maintaining the ability to reconstruct detail at high resolution. More interestingly, we show that their depth of field is essentially inverted compared to regular cameras. Crucial to the success of the light field camera is the way it samples the light field, trading off spatial vs. angular resolution, and how aliasing affects the light field. We present a novel algorithm that estimates a full resolution sharp image and a full resolution depth map from a single input light field image. The algorithm is formulated in a variational framework and it is based on novel image priors designed for light field images. We demonstrate the algorithm on synthetic and real images captured with our own light field camera, and show that it can outperform other computational camera systems.
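To make "digital image refocusing" concrete, here is a minimal sketch of the classic shift-and-add approach. Note that this is not the variational algorithm from the talk, just the simplest common technique. The layout `lf[v][u][y][x]` (a grid of sub-aperture views), the integer `slope` parameter, and the border clamping are all assumptions made for this example.

```python
def refocus(lf, slope):
    """Shift-and-add refocus of a light field stored as lf[v][u][y][x].

    Each sub-aperture view is shifted in proportion to its offset from the
    central view, then all views are averaged. `slope` (an integer number
    of pixels of shift per view step) selects the depth that ends up sharp.
    """
    n_v, n_u = len(lf), len(lf[0])
    height, width = len(lf[0][0]), len(lf[0][0][0])
    cu, cv = (n_u - 1) // 2, (n_v - 1) // 2
    out = [[0.0] * width for _ in range(height)]
    for v in range(n_v):
        for u in range(n_u):
            dx, dy = slope * (u - cu), slope * (v - cv)
            for y in range(height):
                sy = min(max(y + dy, 0), height - 1)  # clamp at the borders
                for x in range(width):
                    sx = min(max(x + dx, 0), width - 1)
                    out[y][x] += lf[v][u][sy][sx]
    scale = 1.0 / (n_u * n_v)
    return [[value * scale for value in row] for row in out]
```

A point that moves by one pixel between neighbouring views adds up to a single sharp pixel when `slope` is 1, and is smeared over a 3 × 3 patch (for a 3 × 3 grid of views) when `slope` is 0. Choosing the slope after capture is what "changing the focus after taking the snapshot" amounts to.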

Bio:

Paolo Favaro received the D.Ing. degree from Universita di Padova, Italy in 1999, and the M.Sc. and Ph.D. degrees in electrical engineering from Washington University in St. Louis in 2002 and 2003 respectively. He was a postdoctoral researcher in the computer science department of the University of California, Los Angeles and subsequently at Cambridge University, UK. Dr. Favaro is now a lecturer (assistant professor) at Heriot-Watt University and an Honorary Fellow at the University of Edinburgh, UK. His research interests are in computer vision, computational photography, machine learning, signal and image processing, estimation theory, inverse problems and variational techniques. He is also a member of the IEEE Society.

Tuesday, December 02, 2014

Intel posts promotional videos for its 3D camera-based RealSense

Another Two Video Promotions from Intel

Intel keeps posting promotional videos for its 3D camera-based RealSense technology. The first one shows refocusing capability similar to Lytro, Pelican Imaging and some Nokia products:

The second video demos 3D scanning:

http://www.intel.com/content/www/us/en/architecture-and-technology/realsense-overview.html

https://software.intel.com/en-us/realsense/home Intel® RealSense™ Developer Kit.

Labels:

3D Camera,

depth camera,

Intel,

light field,

RealSense

Sunday, November 23, 2014

Sunday, November 09, 2014

Thursday, November 06, 2014

Lytro Hits Up The Enterprise With The Introduction Of The Lytro Platform And Dev Kit

https://www.lytro.com/files/LDK_Data_Sheet.pdf

https://www.lytro.com/platform/

From: http://techcrunch.com/2014/11/06/lytro-pivots-to-hit-up-the-enterprise-with-the-introduction-of-the-lytro-platform-and-dev-kit/

Lytro has been working for three years to build a brand new type of camera with light field technology, and while the tech itself is quite incredible, transforming that into a viable business has proven difficult.

Until now, the company has been selling special cameras, the original Lytro and the newer, photographer-friendly Illum. It’s a difficult business that is in fast flux, given that so many entry-level photographers now have a camera in their smartphone and more intensive photographers want a proper DSLR.

And so, Lytro is adding a new revenue stream to its business with the launch of the Lytro Development Kit. For now, it’s a software development kit that comes with an API for integrating light field technology into applications in a number of imaging fields, including holography, microscopy, architecture, and security.

Lytro’s light field sensor takes into account the direction that light is traveling relative to the shot, rather than capturing light on a single plane. This, paired with Lytro’s software, allows for image refocusing post-shot, among other dimension-based features. With the LDK, Lytro is looking to open up that functionality to other fields and businesses, with a revenue stream coming from the enterprise side.

Alongside access to the API and the Lytro processing engine, the company will also be working alongside partners to develop custom devices and photography hardware to accomplish their specific, industry-based goals.

With the launch, Lytro has announced four major partnerships with organizations already on the platform, including NASA’s Jet Propulsion Laboratory, a medical devices startup called General Sensing, the Army Night Vision and Electronic Sensors Directorate, and an unnamed “industrial partner” applying the tech to work with nuclear reactors.

Pricing starts at $20,000 for access to the platform.

Saturday, July 12, 2014

Flexible Organic Image sensor company ISORG just raised 6.4M Euro.

Bpifrance, Sofimac Partners, CEA Investissement, and unnamed angel investors participated in an ISORG financing round totaling 6.4M euros. The new funds will enable ISORG, which already operates a pre-industrial pilot line in Grenoble, to build a new production line, start volume manufacturing in 2015, and deploy internationally.

The French company ISORG is the abbreviation of Image Sensor ORGanic.

ISORG is the pioneer company in organic and printed electronics for large area photonics and image sensors.

ISORG gives vision to all surfaces with its disruptive technology, converting plastic and glass into a smart surface able to see.

ISORG's vision is to become the leading company for opto-electronics systems in printed electronics, developing and mass manufacturing large area optical sensors for the medical, industrial and consumer markets.

Imagine arrays of small low res cameras covering the walls like wall paper.

It would be a reverse holodeck allowing for 360 deg light-field camera imaging.

http://www.isorg.fr/

http://www.isorg.fr/actualites/0/bpifrance-sofimac-partners-et-cea-investissement-participent-a-la-levee-de-fonds-de-isorg_236.html

Plastic Logic 96 x 96 pixel image sensor

Labels: camera, ISORG, light field, Organic

Tuesday, July 01, 2014

LightField Video

What is the Light Field?

LightField Definition:

The LightField is defined as all the lightrays at every point in space travelling in every direction. In full this is a 5D function (three position coordinates plus two direction angles); in free space, where radiance stays constant along a ray, it reduces to 4D data, commonly two positional and two directional coordinates. The concept of the 4D LightField was introduced in the 1990s to solve common problems in computer graphics and vision.

How Does LightField Photography Work?

Traditional cameras – analog or digital – only record a two-dimensional representation of a scene, using the two available dimensions (length and width; pixels along the x and y axes) of the film/sensor.

In contrast, LightField cameras (also called plenoptic cameras) have a microlens array just in front of the imaging sensor. Such arrays consist of many microscopic lenses (often in the range of 100,000) with tiny focal lengths (as low as 0.15 mm), and split up what would have become a single 2D pixel into individual light rays just before they reach the sensor. The resulting raw image is a composition of as many tiny images as there are microlenses.

Here’s the fascinating part: every sub-image differs a little bit from its neighbors, because the lightrays were diverted slightly differently depending on the corresponding microlens’s position in the array.
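As a rough illustration of how the raw microlens image relates to the 4D data, here is a minimal Python sketch. It assumes an idealized sensor where every microlens covers exactly `n` × `n` pixels on a square grid aligned with the pixel grid; real cameras need calibration and resampling first. The function name `lenslet_to_subapertures` and the `lf[u, v, s, t]` layout are my own choices for illustration, not part of any camera SDK.

```python
import numpy as np

def lenslet_to_subapertures(raw, n):
    """Rearrange an idealized lenslet image into a 4D array lf[u, v, s, t].

    raw : 2D array whose height and width are multiples of n
    n   : pixels per microlens along each axis (assumed square, grid-aligned)
    (u, v) index the pixel under each microlens (the ray direction),
    (s, t) index the microlens itself (the ray position).
    """
    h, w = raw.shape
    if h % n or w % n:
        raise ValueError("image size must be a multiple of the microlens pitch")
    s, t = h // n, w // n
    # Split each image axis into (microlens index, pixel-under-microlens index).
    lf = raw.reshape(s, n, t, n)
    # Reorder the axes to (u, v, s, t).
    return lf.transpose(1, 3, 0, 2)
```

Under these assumptions, `lf[u, v]` is one low-resolution view of the scene assembled from the same pixel position under every microlens, and neighboring views differ by a small parallax.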

Next, sophisticated software is used to find matching lightrays across all these images. Once it has collected a list of (1) matching lightrays, (2) their position in the microlens array and (3) within the sub-image, the information can be used to reconstruct a sharp 3D model of the scene.
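The matching step can be sketched in a very reduced form. The Python snippet below is not how any particular product does it; it assumes two views that differ only by a single horizontal integer shift, and it finds that shift by brute-force search over a mean absolute difference cost. The name `estimate_disparity` is hypothetical, and real pipelines estimate a shift per pixel or per patch rather than one for the whole image.

```python
import numpy as np

def estimate_disparity(view_a, view_b, max_shift):
    """Return the horizontal integer shift of view_b that best matches view_a.

    Simplifying assumptions: one global shift for the whole image,
    integer pixel steps, and wrap-around at the borders (np.roll).
    """
    best_shift, best_cost = 0, np.inf
    for d in range(-max_shift, max_shift + 1):
        # Mean absolute difference after shifting view_b by d columns.
        cost = np.abs(view_a - np.roll(view_b, d, axis=1)).mean()
        if cost < best_cost:
            best_shift, best_cost = d, cost
    return best_shift
```

The shift found this way is a disparity: larger values correspond to scene points farther from the plane of focus, which is the information a depth map or 3D model is built from.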

Using this model, you have all of the LightField capabilities at your fingertips: you can define what parts of the image should be in focus or out of focus, define the depth of field, set everything in focus, shift the perspective or parallax a bit, and so on. You can even use the parallax data to create 3D pictures from a single LightField lens and capture.

All of this can be done after you’ve recorded the image.
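Post-capture refocusing is commonly explained as "shift and add": each view is shifted in proportion to its offset from the central view, then all views are averaged. The following Python sketch assumes the light field is already stored as a 4D array `lf[u, v, s, t]` (view indices first, pixel indices second) and uses integer shifts with wrap-around for brevity. The function name `refocus` and the `slope` parameter are illustrative choices, not an existing API.

```python
import numpy as np

def refocus(lf, slope):
    """Shift-and-add refocus of a 4D light field lf[u, v, s, t].

    slope : pixels of shift per unit of view offset; it selects which
            depth plane ends up sharp (0 keeps the original focal plane).
    Assumes integer shifts and wrap-around borders for simplicity.
    """
    n_u, n_v, n_s, n_t = lf.shape
    uc, vc = (n_u - 1) / 2.0, (n_v - 1) / 2.0   # central view position
    out = np.zeros((n_s, n_t), dtype=np.float64)
    for u in range(n_u):
        for v in range(n_v):
            ds = int(round(slope * (u - uc)))
            dt = int(round(slope * (v - vc)))
            # Align this view with the central one for the chosen depth.
            out += np.roll(lf[u, v], shift=(ds, dt), axis=(0, 1))
    return out / (n_u * n_v)
```

Points whose parallax matches `slope` line up across the views and come out sharp; everything else is spread over several pixels and appears blurred. A production implementation would use sub-pixel interpolation and handle image borders properly.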

These are some of my raw notes; please excuse the lack of explanations.

http://lightfield-forum.com/en/

LightField Video

http://www.osiris-project.eu/uploads/presentations/realtime3dlightfieldtransmission_holografika.pdf

http://www.videotechnology.com/lightfield/realtime3dlightfieldtransmission_holografika.pdf

Light Field Cameras

Lytro camera

Light Field Displays

http://www.muscade.eu/newsletters/December10.pdf

HoloVizio

Holografika http://www.holografika.com

http://www.youtube.com/watch?v=rPWFzq7C-v4

http://www.youtube.com/watch?v=BVJA6MQKqCA

http://www.youtube.com/watch?v=vyyvfE9Zm7A

3d Hologram Korea

http://www.grainandpixel.com/

http://www.holovision.com/holovision-technology-for-3d-display-without-special-eyewear.html

Formats

http://en.wikipedia.org/wiki/Free_viewpoint_television

Multiview & 3D formats

http://en.wikipedia.org/wiki/Multiview_Video_Coding

http://www.iis.fraunhofer.de/en/bf/bsy/fue/bild/3D/

http://www.hhi.fraunhofer.de/en/departments/image-processing/applications/multiview-generation-for-3d-digital-signage/

http://www.hhi.fraunhofer.de/en/departments/image-processing/applications/svc-multiview-video-coding-over-dvb-t2/

Labels: Freeviewpoint, Lenticular, light field, multiview

Sony Lightfield Camera Application Promises Full Resolution Stereo Imaging

Lightfield-Forum noticed a Sony patent application for a light field camera that maintains the full image sensor resolution while providing stereo imaging. The 73-page, 43-figure application US20140071244, "Solid-state image pickup device and camera system" by Isao Hirota, proposes a dual-level microlens and a two-sided image sensor with pixels rotated by 45 degrees to deliver on that promise:

Patent abstract:

There are provided a solid-state image pickup device and a camera system that include no useless pixel arrangement and are capable of suppressing decrease in resolution caused by adopting stereo function. A pixel array section including a plurality of pixels arranged in an array is included. Each of the plurality of pixels has a photoelectric conversion function. Each of the plurality of pixels in the pixel array section includes a first pixel section and a second pixel section. The first pixel section includes at least a light receiving function. The second pixel section includes at least a function to detect electric charge that has been subjected to photoelectric conversion. The first and second pixel sections are formed in a laminated state. Further, the first pixel section is formed to have an arrangement in a state shifted in a direction different from first and second directions that are used as references. The second direction is orthogonal to the first direction. The second pixel section is formed in a square arrangement along the first direction and the second direction orthogonal to the first direction.

For more information, check out the full patent details here: Patent US20140071244 – Solid-state image pickup device and camera system

Labels: 3D Camera, depth camera, Lenticular, light field
